Overview

The Werne-NWRA Triple Code is a highly accurate pseudo-spectral fluid-dynamics solver designed to run efficiently on modern massively parallel supercomputer platforms. Triple simulates the Navier-Stokes equations in the Boussinesq approximation (i.e., filtering out sound waves), using either Direct Numerical Simulation (DNS) or Large-Eddy Simulation (LES) techniques. Triple development started in 1992 as part of an NSF Grand Challenge Applications Group (GCAG) for Geophysical and Astrophysical Fluid Dynamics.

It was designed and written by Dr. Joe Werne and has benefited from collaborative contributions by Prof. Keith Julien, Dr. Michael Gourlay, and Dr. Tom Lund.

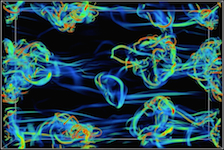

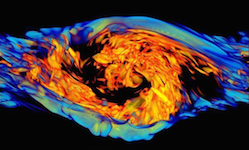

Triple has been used to address a range of research applications, including turbulent shear (i.e., Kelvin-Helmholtz) instability, monochromatic gravity-wave breaking, multi-scale gravity-wave dynamics, rotating and non-rotating turbulent convection, aircraft wake-vortex dynamics under varied conditions, and high-altitude bore generation in thermal and Doppler ducts. Modified versions of Triple have been developed to simulate acoustic wave propagation in inhomogeneous media and an asymptotically reduced set of equations derived for rapidly rotating convection.

The current version of Triple is 4.0.1. Version 3.2.0 of the NWRA Triple Code has been incorrectly referenced as GATS-DNS or Ling Wang's DNS. A summary of the attributes of each code version is given below under Version History.

Heritage

Triple development started in 1992 while Dr. Werne participated in one of the very first NSF Grand Challenge projects. Triple's algorithms and data layout have been updated over time to keep pace with evolving supercomputer design, spanning highly vectorized, modestly parallel, massively parallel distributed-memory, and modern heterogeneous massively parallel architectures. To date it has served as the workhorse code for seven NSF, NASA, and DoD Grand Challenge projects and three DoD Capability Application Projects (CAP), accumulating over 100 million CPU hours modeling a wide range of problems.

Over its history, Triple has been ported to a large number of different systems, including Cray (XC30, XE6, XT5, XT4, XT3, T3E, T3D, C90, YMP), IBM (Blue Gene/L, P6, P5+, P4+, SP), SGI (Altix, O3k, O2k), Intel (Xeon Cluster), and Compaq (SC40/45) machines. In many of these cases, not only did the compute platforms differ, but so did the queuing system, the archival storage system and its software, the local data-storage hardware and software, and the interconnecting network. After all, many of these machines resided at different supercomputing centers and were operated by different agencies.

To handle porting to so many different systems, Dr. Werne developed and implemented a software translation-layer strategy that allows Triple's code and supporting scripts to run unaltered on all of these platforms. The translation-layer approach was formalized and further developed by Dr. Werne, Dr. Michael Gourlay, and Dr. Chris Bizon into the Practical Supercomputing Toolkit (PST), which was sponsored by the DoD High Performance Computing and Modernization Office (HPCMO) and provided to its 5000-member user base.

Educational Outreach

Triple includes sophisticated job-preparation and automation scripts that dramatically simplify its use, even for first-time users who have never accessed a supercomputer before. Taking advantage of this capability, Dr. Werne has led immersive, hands-on training programs in which teams of students solved research-level problems on a National Center for Atmospheric Research (NCAR) supercomputer in a time-limited (e.g., two-week) workshop setting. After attending lectures by Dr. Werne on the phenomena being studied (e.g., monochromatic gravity-wave breaking or Kelvin-Helmholtz instability), student teams selected problems to simulate, ran Triple for the parameter values they chose, used the provided postprocessing routines to analyze Triple's output, and then wrote reports presenting their results.

The first student workshop of this sort was conducted as part of the NCAR 2008 Theme of the Year Summer School. Example notes on Triple's use provided to the students can be found here.

One of the NCAR Theme of the Year students, Dr. Daniel Dombroski, later joined NWRA, working with Dr. Werne on a solar physics research project.

Dr. Werne offered a second workshop as part of the University of Colorado at Boulder's Fluid Instabilities, Waves, and Turbulence graduate course ASTR-5410, taught by Prof. Juri Toomre. The final student reports for ASTR-5410 presenting their results obtained with Triple can be found here.

Research Using Triple

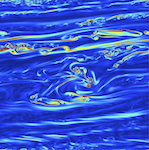

Triple was originally developed to study rotating convection and penetrative convection in the ocean and the solar interior; this work was sponsored by NSF and NASA. Dr. Werne later modified Triple to study stratified dynamics, including high-Reynolds-number Kelvin-Helmholtz instability, gravity-wave breaking, and multi-scale gravity-wave interactions in Earth's atmosphere; this work was sponsored by NSF, NASA, and AFRL. These stratified turbulence simulations were then used to study optical propagation through the atmosphere, sponsored by AFRL and MDA. Triple has also been applied to study turbulent wakes behind towed and self-propelled bodies, sponsored by ONR.


Code Description

Algorithm Design:

Triple solves the Navier-Stokes equations in a Cartesian geometry using a pseudo-spectral algorithm that is formally Nth-order accurate in space (where N is the number of spectral modes representing a given spatial direction) and 3rd-order accurate in time. Field variables are represented by real, discrete Fourier-series or Chebyshev-polynomial expansions. Fourier series accurately describe either periodic or bounded fields, where "bounded" refers to Dirichlet or Neumann conditions, represented respectively by sine- or cosine-series expansions. For cases employing Chebyshev polynomials, a primitive-variable formulation with tau corrections is used, and general boundary conditions are possible.
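To make the sine-series case concrete, the following is a minimal NumPy sketch (an illustration, not Triple's Fortran) for a field with Dirichlet conditions f(0) = f(L) = 0. It assumes samples at the interior points x_j = jL/n, obtains the sine coefficients from an FFT of the odd extension (a DST-I), and differentiates term by term, which turns the sine series into a cosine series. The function names are hypothetical.

```python
import numpy as np


def sine_coefficients(f_interior):
    """Sine-series coefficients b_k (k = 1..n-1) of a field with f(0) = f(L) = 0.

    f_interior holds samples f(x_j) at x_j = j*L/n for j = 1..n-1. The FFT of
    the odd extension of these samples is purely imaginary, and its imaginary
    part at mode k equals -n * b_k (a DST-I computed with a complex FFT).
    """
    f_interior = np.asarray(f_interior, dtype=float)
    n = f_interior.size + 1
    odd_ext = np.concatenate(([0.0], f_interior, [0.0], -f_interior[::-1]))
    spectrum = np.fft.fft(odd_ext)
    return -spectrum[1:n].imag / n


def derivative_from_sine_series(b, x, length):
    """Evaluate d/dx of sum_k b_k sin(k pi x / L): a cosine series."""
    wavenumbers = np.arange(1, b.size + 1) * np.pi / length
    return np.cos(np.outer(x, wavenumbers)) @ (b * wavenumbers)


# Example: f(x) = sin(3 pi x / L) has b_3 = 1 and all other b_k = 0.
length, n = 2.0, 32
x = np.arange(1, n) * length / n
b = sine_coefficients(np.sin(3 * np.pi * x / length))
df = derivative_from_sine_series(b, x, length)
```

A Neumann field would use the even extension and a cosine series instead; the derivative then becomes a sine series.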

Time-stepping is carried out in spectral space by advancing the Fourier-series and Chebyshev-polynomial coefficients. Triple uses the low-storage, mixed implicit/explicit 3rd-order Runge-Kutta scheme of Spalart, Moser, and Rogers (1991), whose storage requirements are typical of most 2nd-order schemes. This permits larger numerical domains than are possible with most other 3rd-order methods. Triple treats the nonlinear terms explicitly while advancing the rotation, buoyancy, and diffusion terms implicitly.
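A scalar sketch of this kind of low-storage implicit/explicit Runge-Kutta step is shown below for du/dt = λu + N(u), with the linear term λu (standing in for diffusion, buoyancy, or rotation in spectral space, where it is diagonal) treated implicitly and N(u) explicitly. The coefficients are those we recall from Spalart, Moser, and Rogers (1991) and should be checked against the paper; the function names are hypothetical and this is not Triple's code.

```python
import numpy as np

# Substage coefficients as recalled from Spalart, Moser and Rogers (1991).
GAMMA = (8 / 15, 5 / 12, 3 / 4)     # explicit weight on N(u) at the current substage
ZETA = (0.0, -17 / 60, -5 / 12)     # explicit weight on N(u) from the previous substage
ALPHA = (29 / 96, -3 / 40, 1 / 6)   # explicit weight on the linear term
BETA = (37 / 160, 5 / 24, 1 / 6)    # implicit weight on the linear term


def rk3_imex_step(u, dt, lam, nonlinear):
    """Advance du/dt = lam*u + nonlinear(u) by one full step (three substages).

    Between substages only the solution and the previous substage's nonlinear
    term are carried, which is what keeps the scheme low-storage.
    """
    n_prev = 0.0  # unused at the first substage because ZETA[0] == 0
    for a, b, g, z in zip(ALPHA, BETA, GAMMA, ZETA):
        n_curr = nonlinear(u)
        rhs = u + dt * (a * lam * u + g * n_curr + z * n_prev)
        u = rhs / (1.0 - dt * b * lam)  # implicit solve; trivial for diagonal lam
        n_prev = n_curr
    return u


def integrate(u0, t_end, nsteps, lam, nonlinear):
    dt = t_end / nsteps
    u = u0
    for _ in range(nsteps):
        u = rk3_imex_step(u, dt, lam, nonlinear)
    return u


# Explicit part alone (lam = 0): the error should fall roughly 8x when dt halves.
err20 = abs(integrate(1.0, 1.0, 20, 0.0, lambda u: -u) - np.exp(-1.0))
err40 = abs(integrate(1.0, 1.0, 40, 0.0, lambda u: -u) - np.exp(-1.0))
# Mixed case: implicit linear term plus explicit nonlinear term.
errc20 = abs(integrate(1.0, 1.0, 20, -1.0, lambda u: -u) - np.exp(-2.0))
errc40 = abs(integrate(1.0, 1.0, 40, -1.0, lambda u: -u) - np.exp(-2.0))
# A stiff linear term stays stable at dt*|lam| = 10 because it is implicit.
u_stiff = integrate(1.0, 1.0, 100, -1000.0, lambda u: 0.0 * u)
```

Treating the stiff linear terms implicitly is what lets the time step be set by the explicit nonlinear terms rather than by diffusion.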

Derivatives are computed in spectral space via wavenumber multiplication, while nonlinear terms are computed efficiently in physical space via field-variable multiplication.
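For a periodic direction, the two operations described above can be sketched in NumPy as follows: the derivative multiplies each Fourier coefficient by ik, and the advective product u du/dx is formed pointwise in physical space. The 2/3-rule truncation used to remove aliasing is an assumption for this sketch (the text does not state which dealiasing rule Triple uses), and the function names are hypothetical.

```python
import numpy as np


def spectral_derivative(u, length):
    """d/dx of a real, periodic field sampled at n uniform points on [0, length)."""
    n = u.size
    k = 2 * np.pi * np.fft.rfftfreq(n, d=length / n)  # angular wavenumbers 0..n/2
    du_hat = 1j * k * np.fft.rfft(u)                  # differentiate: multiply by i k
    if n % 2 == 0:
        du_hat[-1] = 0.0  # drop the Nyquist mode, whose derivative is not representable
    return np.fft.irfft(du_hat, n)


def truncate_two_thirds(u):
    """Zero every mode k with 3k >= n (the 2/3 rule, an assumed dealiasing choice)."""
    u_hat = np.fft.rfft(u)
    k = np.arange(u_hat.size)
    u_hat[3 * k >= u.size] = 0.0
    return np.fft.irfft(u_hat, u.size)


def advective_term(u, length):
    """u du/dx: derivative in spectral space, product in physical space."""
    u = truncate_two_thirds(u)
    return truncate_two_thirds(u * spectral_derivative(u, length))


n, length = 32, 2 * np.pi
x = np.arange(n) * length / n
du = spectral_derivative(np.sin(3 * x), length)  # should equal 3 cos(3x)
adv = advective_term(np.sin(x), length)           # sin x cos x = sin(2x) / 2
```

Computing the product pointwise costs O(n) after two O(n log n) transforms, instead of the O(n^2) convolution the product would require in spectral space.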

Discrete Fourier transforms are used to move between spectral and physical space; a cosine transform and the Gauss-Lobatto collocation points are used to transform the Chebyshev series.
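Because T_k(cos θ) = cos(kθ), sampling at the Gauss-Lobatto points x_j = cos(πj/n) turns the Chebyshev transform into a discrete cosine transform. The NumPy sketch below (hypothetical function names, not Triple's implementation) computes the coefficients with an FFT of the even extension of the samples and checks them with NumPy's own Chebyshev evaluator.

```python
import numpy as np
from numpy.polynomial import chebyshev as C


def gauss_lobatto_points(n):
    """Chebyshev Gauss-Lobatto points x_j = cos(pi j / n), j = 0..n."""
    return np.cos(np.pi * np.arange(n + 1) / n)


def chebyshev_coefficients(f):
    """Coefficients a_k such that f(x_j) = sum_k a_k T_k(x_j) at the n+1 points.

    Since T_k(x_j) = cos(pi j k / n), this is a DCT-I, evaluated here as the
    FFT of the even extension [f_0, ..., f_n, f_{n-1}, ..., f_1].
    """
    f = np.asarray(f, dtype=float)
    n = f.size - 1
    even_ext = np.concatenate((f, f[-2:0:-1]))  # length 2n
    g = np.fft.fft(even_ext).real[: n + 1]
    c = np.ones(n + 1)
    c[0] = c[-1] = 2.0  # end points carry half weight in the discrete sum
    return g / (n * c)


x = gauss_lobatto_points(8)
a = chebyshev_coefficients(4 * x**3 - 3 * x)  # T_3(x): expect a = [0, 0, 0, 1, 0, ...]
```

Clustering the points near the walls, as the Gauss-Lobatto points do, is also what gives Chebyshev expansions their good resolution of boundary layers.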

Optimization:

Triple's discrete 3D Fast Fourier Transforms (FFTs) account for approximately 75% of its computational cost. It is therefore important for Triple's FFT implementation to be computationally efficient. To accomplish this, Triple exploits the symmetry properties of FFTs of real data to achieve a significant operation-count reduction compared to complex FFTs.
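One classic way to exploit real-data symmetry is sketched below; it is a standard textbook trick and not necessarily the one Triple uses. Because the transform of real data is Hermitian, X[n-k] = conj(X[k]), two real sequences can be packed into one complex sequence, transformed with a single complex FFT, and separated afterwards. The function name is hypothetical.

```python
import numpy as np


def two_real_ffts(a, b):
    """Transform two equal-length real sequences with one complex FFT.

    Pack z = a + i b. Since A and B are Hermitian, conj(Z[n-k]) = A[k] - i B[k],
    so the two spectra separate as A = (Z + Z*) / 2 and B = (Z - Z*) / (2i).
    """
    z = np.fft.fft(np.asarray(a, dtype=float) + 1j * np.asarray(b, dtype=float))
    z_mirror = np.conj(np.roll(z[::-1], 1))  # z_mirror[k] = conj(Z[(n - k) % n])
    return (z + z_mirror) / 2, (z - z_mirror) / 2j


rng = np.random.default_rng(0)
a, b = rng.standard_normal(16), rng.standard_normal(16)
A, B = two_real_ffts(a, b)
half = np.fft.rfft(a)  # NumPy's real FFT stores only the n//2 + 1 independent modes
```

Either approach roughly halves the work and storage relative to treating real data as complex.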

To reuse cache effectively, which is important on modern massively parallel architectures, Triple performs its FFTs along the contiguous (i.e., the first Fortran) array dimension. Transforms in other directions are computed only after suitable transpose operations arrange the transform data contiguously. Triple also optimizes its FFT routine for cache reuse by substituting trigonometric multiple-angle recurrence relations for the more traditional table-lookup algorithm.
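The recurrence idea can be illustrated with the standard multiple-angle relations cos((k+1)t) = 2cos(t)cos(kt) - cos((k-1)t), and likewise for sine: the FFT twiddle factors exp(-2πik/n) are generated on the fly from cos(t) and sin(t) alone, so no table has to be kept in cache. The sketch below is an assumption about the general technique, not Triple's exact recurrence; rounding errors grow slowly with k, which production codes may counter by periodically restarting from exactly computed values.

```python
import numpy as np


def twiddles_by_recurrence(n, count):
    """Return w_k = exp(-2j*pi*k/n) for k = 0..count-1 without a lookup table.

    Uses cos((k+1)t) = 2 cos(t) cos(kt) - cos((k-1)t) and the matching sine
    relation, so only cos(t) and sin(t) are evaluated directly.
    """
    t = 2 * np.pi / n
    two_cos_t = 2 * np.cos(t)
    cos_vals = np.empty(count)
    sin_vals = np.empty(count)
    cos_vals[0], sin_vals[0] = 1.0, 0.0
    if count > 1:
        cos_vals[1], sin_vals[1] = np.cos(t), np.sin(t)
    for k in range(1, count - 1):
        cos_vals[k + 1] = two_cos_t * cos_vals[k] - cos_vals[k - 1]
        sin_vals[k + 1] = two_cos_t * sin_vals[k] - sin_vals[k - 1]
    return cos_vals - 1j * sin_vals


w = twiddles_by_recurrence(1024, 512)
exact = np.exp(-2j * np.pi * np.arange(512) / 1024)
```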

Most of Triple's inter-processor communication occurs in the global transpose needed for its 3D FFTs. The communication pattern for the transpose is organized over a 2D array of processors, NCPU = P1 × P2, with communication confined within the P1 and P2 groups. The optimal values of P1 and P2 depend on both 1) the size and shape of the numerical domain simulated and 2) the physical layout of the network and processors on which the calculation is carried out. A benefit of this approach is that very large numbers of processors can be used efficiently. For example, even a domain as small as NX × NY × NZ = 200 × 200 × 200 can be run efficiently on as many as NCPU = 40,000 processors.
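The sketch below illustrates both ideas on a single node under stated assumptions. `fft3d_by_transposes` computes a 3D FFT using 1D transforms only along the contiguous axis (the last axis in NumPy's C order, the analogue of the first Fortran index), rotating and copying the array between passes as a stand-in for the global transpose. `pencil_layouts` lists the P1 × P2 factorizations allowed by a standard 2D pencil layout, which is an assumed model rather than Triple's documented one; under it, the 200³ grid on 40,000 processors admits only P1 = P2 = 200, i.e., one grid line per processor.

```python
import numpy as np


def fft3d_by_transposes(a):
    """3D FFT using 1D FFTs only along the contiguous (last) axis.

    After each pass the axes are rotated, (i, j, k) -> (k, i, j), and copied
    so the next direction becomes contiguous, mimicking a transpose. After
    three passes the original axis order is restored.
    """
    b = np.array(a, dtype=complex)  # contiguous complex copy
    for _ in range(3):
        b = np.fft.fft(b, axis=-1)
        b = np.ascontiguousarray(np.moveaxis(b, -1, 0))
    return b


def pencil_layouts(ncpu, nx, ny, nz):
    """(P1, P2) pairs with P1 * P2 == ncpu allowed by an assumed pencil layout.

    Assumed layout: x-pencils split y over P1 and z over P2, y-pencils split
    x over P1 and z over P2, z-pencils split x over P1 and y over P2; every
    processor must own at least one grid line in each configuration.
    """
    layouts = []
    for p1 in range(1, ncpu + 1):
        if ncpu % p1:
            continue
        p2 = ncpu // p1
        if p1 <= min(nx, ny) and p2 <= min(ny, nz):
            layouts.append((p1, p2))
    return layouts


rng = np.random.default_rng(1)
field = rng.standard_normal((4, 6, 8))
spectrum = fft3d_by_transposes(field)
layouts = pencil_layouts(40_000, 200, 200, 200)
```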

Triple is designed to accommodate massive amounts of disk I/O during production runs. This is useful for post-processing or visualizing large 3D data sets at a later time, possibly on a different computing system. To accomplish this, Triple employs 1) write-behind data buffering to overlap computation and I/O and 2) a parallel I/O strategy called Hierarchical Data Structuring (HDS), which organizes file operations into a branching pattern that minimizes operating-system overhead and avoids reliance on system-parameter settings over which the user may have little or no control. On many high-performance computing systems, HDS and data buffering combined yield production runs that execute nearly as fast as tests with 3D I/O disabled.
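A minimal Python sketch of the two ingredients follows. The directory fan-out used by `hierarchical_path` is a hypothetical stand-in for HDS (the text does not specify Triple's branching pattern): per-rank files are spread over a tree so no single directory accumulates thousands of entries. `WriteBehindWriter` copies each output buffer into a bounded queue and lets a background thread write it, so the caller can resume computing while the I/O proceeds. Both names are illustrative only.

```python
import os
import queue
import tempfile
import threading

import numpy as np


def hierarchical_path(root, rank, fanout=16, depth=2):
    """Hypothetical HDS-style path: spread per-rank files over a directory tree."""
    parts = []
    r = rank
    for _ in range(depth):
        parts.append(f"{r % fanout:02d}")
        r //= fanout
    return os.path.join(root, *reversed(parts), f"rank{rank:06d}.bin")


class WriteBehindWriter:
    """Queue copies of output buffers and write them from a background thread."""

    def __init__(self, max_pending=4):
        self._queue = queue.Queue(maxsize=max_pending)  # bounds buffered memory
        self._thread = threading.Thread(target=self._drain, daemon=True)
        self._thread.start()

    def _drain(self):
        while True:
            item = self._queue.get()
            if item is None:  # sentinel sent by close()
                break
            path, data = item
            os.makedirs(os.path.dirname(path), exist_ok=True)
            data.tofile(path)

    def write(self, path, array):
        # Copy first so the solver may overwrite its own buffer immediately.
        self._queue.put((path, np.array(array, copy=True)))

    def close(self):
        """Flush all pending writes and stop the background thread."""
        self._queue.put(None)
        self._thread.join()


root = tempfile.mkdtemp()
writer = WriteBehindWriter()
for rank in range(40):
    writer.write(hierarchical_path(root, rank), np.full(8, float(rank)))
writer.close()
```

The bounded queue is a design choice: if I/O falls behind, `write` blocks instead of letting buffered copies exhaust memory.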

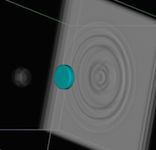

Parallel Performance:

From its inception, Triple has been optimized for extreme performance, and as machine architectures have evolved, so has Triple's internal data layout, so that it is best suited to the machines on which it runs. To date, Triple has employed four different data-decomposition strategies as it has followed the history of machine architectures from vector, to modestly parallel, to distributed memory, and finally to today's heterogeneous massively parallel designs.

Some History: In 1992, Triple development began on the NCAR Cray YMP, for which maximal vector length provided the greatest performance potential. Triple's discrete FFTs were therefore initially computed over its data arrays' last Fortran index, allowing vectorization over the first two array indices. Then, when porting Triple to the newly installed 16-processor Cray C90 at the NSF Pittsburgh Supercomputing Center (PSC), Dr. Werne wrote Triple's 2nd-generation 3D FFT algorithm, which performs its discrete FFTs over the middle array index, allowing modest vectorization over the first index and loop-level parallelization over the last index. Later, when the distributed-memory 500-processor Cray T3D was installed at PSC, Dr. Werne wrote Triple's 3rd-generation 3D FFT algorithm using Cray's SHMEM communication library, this time performing computations over the first array index to maximize both cache reuse and the number of simultaneous parallel tasks, which were distributed among the last two array indices. This strategy worked well for years, but when the number of cores exceeds NCPU = 5000, the all-to-all communication pattern associated with this data layout becomes latency bound, and a more general strategy is needed.

To address this problem, Dr. Werne wrote Triple's current (4th-generation) FFT routine, which factors communication into two groups, P1 and P2, that send messages separately through the machine network. Here P1 and P2 are factors of NCPU, i.e., NCPU = P1 × P2, and Triple's 3rd-generation FFT is simply the special case P1 = 1. Some of the details of this approach are described in the Optimization section above.

|

|

|

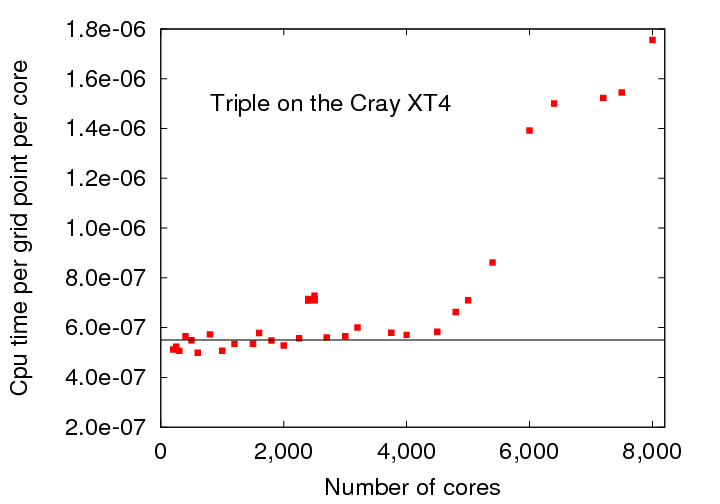

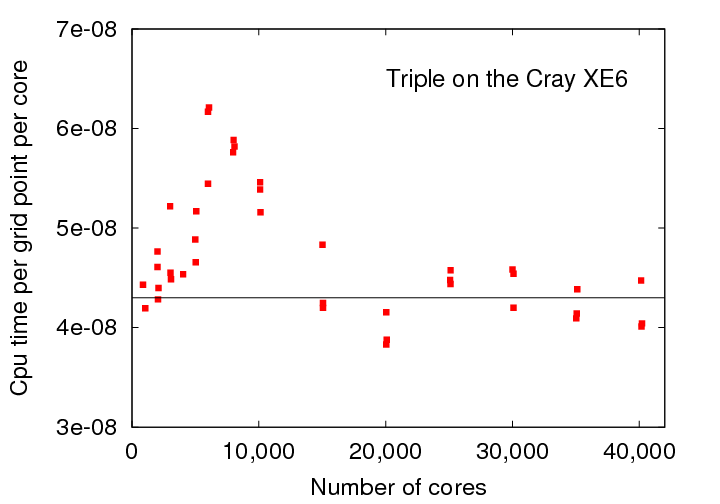

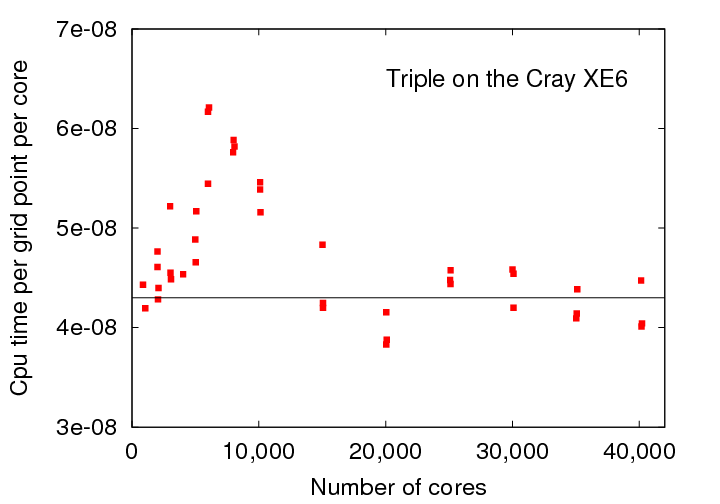

Figure 1: Triple Code parallel performance for (a) simple and (b) factored domain decompositions.

|

Figure 1 shows Triple's parallel performance for its 3rd-generation (left panel) and 4th-generation (right panel) FFTs. The loss of perfect parallel scaling in the left panel is evident: the per-core execution time rises dramatically for NCPU > 5000 on the Cray XT4 at the Army Engineer Research and Development Center (ERDC). In contrast, the right panel shows perfect parallel scaling recovered for NCPU > 15,000 when a factor of P1 = 16 is used on the Air Force Research Laboratory (AFRL) Cray XE6.

Note that Figure 1 shows the two cases P1 = 1 (left panel) and P1 = 16 (right panel) running on two different machines. As the figure suggests, the optimal value of P1 depends on NCPU and on the machine being used. Triple's 4th-generation FFT offers the flexibility to select the optimal value of P1, regardless of the machine or the number of cores used.
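Because the best P1 depends on both NCPU and the machine, one practical approach is simply to measure: time a few steps for every factorization NCPU = P1 × P2 and keep the fastest. The sketch below is a hypothetical helper, not part of Triple; `time_per_step` stands for a user-supplied benchmark (for example, a short timed run on the target machine), and the cost function in the usage line is made up purely to exercise the search.

```python
def divisor_pairs(ncpu):
    """All (P1, P2) with P1 * P2 == ncpu."""
    return [(p1, ncpu // p1) for p1 in range(1, ncpu + 1) if ncpu % p1 == 0]


def choose_p1(ncpu, time_per_step):
    """Return the P1 that minimizes the measured cost, plus all timings.

    time_per_step(p1, p2) is supplied by the user; the search itself is
    machine independent.
    """
    timings = {p1: time_per_step(p1, p2) for p1, p2 in divisor_pairs(ncpu)}
    best = min(timings, key=timings.get)
    return best, timings


# Made-up cost model for illustration only: cost grows with p1 + p2.
best_p1, timings = choose_p1(64, lambda p1, p2: p1 + p2)
```

In practice the candidate list would usually be pruned first, for example to the factorizations the domain size allows.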

Although Triple's inter-processor communication was initially implemented using the Cray SHMEM library on the Cray T3D, it was later modified to allow either SHMEM or MPI (Message Passing Interface) communication when Triple was ported to IBM platforms. This option remains available today, though SHMEM is becoming less widely available.

Job-Preparation and Automation:

Many of the logistical operations associated with large-scale computing pose significant challenges. To address these issues, Dr. Werne developed a modular system of code and Perl scripts to simplify, streamline, and automate large-scale computing tasks. Strategies incorporated in Triple include:

1) automating job-script preparation and submission so that users are spared these repetitive and mindless tasks;

2) defining an irreducible set of job-related parameters for the job-preparation input file, removing redundancy and thereby minimizing potential user error during batch-job specification;

3) migrating large 3D data volumes to archival storage automatically, in real time, while a batch job is executing, so that the impact on local disk space is minimized; and

4) developing a translation layer for predictable tasks, including file-transfer operations, queue-system control directives and parameters, and job-submission syntax, so that code can be more easily maintained at multiple centers and users need not memorize multiple syntaxes to solve the same problem.

This last set of tools has been adopted by the Department of Defense as its "Uniform Command Line Interface"; see www.pstoolkit.org, home of the "Practical Supercomputing Toolkit (PST)" developed by Dr. Werne. With this suite of software tools, Dr. Werne and colleagues spend very little time and effort on time-consuming, repetitive, and mundane tasks and can instead concentrate on higher-level scientific work. As a result, simulations using Triple benefit from more than highly efficient and accurate numerical methods: they also benefit from an automated and flexible system of code that makes more efficient use of the most valuable resource involved in high-performance computing, the time of the user carrying out the simulations.
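Strategies 2) and 4) can be illustrated together in a few lines of Python. The sketch below is hypothetical (it is not PST's interface): a table maps generic actions and job parameters onto scheduler-specific syntax, and the node count is derived from NCPU and a per-platform cores-per-node value rather than typed in separately, so the two cannot disagree. The command names and directive flags are the standard ones for PBS Pro and Slurm; the table layout and function names are assumptions.

```python
import math

# Hypothetical translation table; commands and flags are standard PBS Pro
# and Slurm syntax, but the table structure is illustrative only.
SCHEDULERS = {
    "pbs": {
        "submit": "qsub", "status": "qstat", "cancel": "qdel",
        "prefix": "#PBS",
        "name": ["-N {name}"],
        "walltime": ["-l walltime={walltime}"],
        "resources": ["-l select={nodes}:ncpus={cores_per_node}:mpiprocs={cores_per_node}"],
    },
    "slurm": {
        "submit": "sbatch", "status": "squeue", "cancel": "scancel",
        "prefix": "#SBATCH",
        "name": ["--job-name={name}"],
        "walltime": ["--time={walltime}"],
        "resources": ["--nodes={nodes}", "--ntasks={ncpu}"],
    },
}


def job_header(scheduler, name, walltime, ncpu, cores_per_node):
    """Emit batch directives from a minimal parameter set.

    cores_per_node would come from a per-platform table; the node count is
    derived from it and ncpu instead of being specified by the user.
    """
    s = SCHEDULERS[scheduler]
    values = {
        "name": name,
        "walltime": walltime,
        "ncpu": ncpu,
        "nodes": math.ceil(ncpu / cores_per_node),
        "cores_per_node": cores_per_node,
    }
    lines = ["#!/bin/bash"]
    for key in ("name", "walltime", "resources"):
        lines += [f"{s['prefix']} {t.format(**values)}" for t in s[key]]
    return "\n".join(lines)


def command(scheduler, action, *args):
    """Translate a generic action ('submit', 'status', 'cancel') into an argv list."""
    return [SCHEDULERS[scheduler][action], *args]


slurm_header = job_header("slurm", "kh_run", "01:00:00", 256, 32)
pbs_header = job_header("pbs", "kh_run", "01:00:00", 256, 32)
```

Users then write `command(site_scheduler, "submit", "run.sh")` everywhere, and only the table changes from one center to the next.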

Postprocessing:

A suite of approximately twenty postprocessing routines has been developed to analyze Triple's output. Energy, momentum, available potential energy, and higher-order budgets are computed for mean, spanwise-averaged, and fluctuating quantities, totaling more than 500 statistical terms. Probability distributions and their first four moments are also computed, as are all spectra, 2nd-order structure functions, and 2nd-order structure tensors. The routines also generate three-dimensional volume-rendering visualization data, fixed-position time traces, and analyses of Triple's particle-tracer flow-advection data. All of these routines are carefully written to require no more per-processor memory than Triple itself, ensuring that memory needs are always satisfied; i.e., the machine on which Triple computed a solution is guaranteed to also be able to postprocess the data Triple generated. Like Triple, the suite of supporting postprocessing routines benefits from automated job-preparation, compilation, and submission, so these routines are easily modified to incorporate new analyses.
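Two of the statistics listed above are easy to state in code. The NumPy sketch below (hypothetical names, not the Triple postprocessing suite) computes a probability distribution and its first four moments, plus the 2nd-order structure function D2(r) = ⟨(u(x + r) - u(x))²⟩ along a periodic direction. For u = sin x, D2(r) = 1 - cos r, which the usage lines check.

```python
import numpy as np


def pdf(values, bins=50):
    """Histogram estimate of the probability density: (bin centers, density)."""
    density, edges = np.histogram(np.ravel(values), bins=bins, density=True)
    return 0.5 * (edges[:-1] + edges[1:]), density


def first_four_moments(values):
    """Mean, variance, skewness and kurtosis (not excess kurtosis)."""
    v = np.asarray(values, dtype=float).ravel()
    mean = v.mean()
    d = v - mean
    var = np.mean(d**2)
    return mean, var, np.mean(d**3) / var**1.5, np.mean(d**4) / var**2


def second_order_structure_function(u, max_sep):
    """D2(r) = <(u(x + r) - u(x))**2> along the last (periodic) axis,
    for separations r = 0..max_sep in grid units."""
    u = np.asarray(u, dtype=float)
    return np.array([np.mean((np.roll(u, -r, axis=-1) - u) ** 2)
                     for r in range(max_sep + 1)])


n = 64
dx = 2 * np.pi / n
u = np.sin(np.arange(n) * dx)
moments = first_four_moments(u)             # sine: (0, 1/2, 0, 3/2)
d2 = second_order_structure_function(u, 8)  # sine: 1 - cos(r dx)
centers, density = pdf(u, bins=16)
```

For 3D fields the same functions apply along any axis after moving it last, and `np.roll` keeps the separation periodic, matching Fourier directions.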

Triple Version History:

1.0.0 (07/92) Initial version of Triple code using maximal vector lengths.

1.2.0 (01/93) Second-generation FFT algorithm, new data layout for discrete FFT computation over middle index, loop-level parallelization over last index, vectorization over 1st index.

1.3.0 (06/94) Third-generation FFT algorithm, new data layout for FFT computation over 1st array index, parallelization over last two indices. Physical-space partitioned among processors in X direction. Nyquist mode removed. Cosine-expansion recovery requires recurrence relations.

2.0.0 (04/95) Nyquist mode reintroduced, NCPUT variable introduced.

2.0.1 (11/96) Triple outputs spectra files; spec and associated scripts and code now obsolete. codeit now reads VERSION file.

2.0.2 (09/99) New version of _ked routines, standardize subroutine calls. Many files affected.

2.0.3 (10/00) Add iprob=23,24. slide operator introduced, adds analytic (exact) mean-flow advection.

2.0.4 (11/00) Add iprob=120,121 to test slide-operator code. islide.ne.1 is known to be buggy.

2.0.5 (06/01) Port to goliat2.ffi.no (SGI O3, openPBS) and gg3.ffi.no (SGI Altix, PBS).

KNOWN BUGS --

- On goliat2, the number of processes spawned by each processor exceeds the openPBS default, so the job is aborted by the queuing system. Jobs can be run interactively only.

- On gg3, the code hangs just before a call to t3e_transp.

- runbackups, runbackups0, readFvol, and sxyz2dat0 all contain bugs.

2.0.6 (12/01) Bugs removed: runbackups, runbackups0, readFvol, sxyz2dat0. runbackups can now override platform::CANALTERPES. New: makeprog.altix, makespec.altix, makespec1.altix, makeinterp.altix. restart_t3d_zyx.f now reads restart.tmp. minstep defined for ntimes=2 in triple_zyx.f. New post-processing routines added. More eigenfunction initialization data added.

2.0.7 (12/04) Port to NAVO's romulus, IBM SP P4+

3.0.0 (03/05) Restart, volume, and planes data consolidated into single files using MPI-IO. Endian-ness checked for input files; all output is now big endian, including the restart file, making restart files portable. Reduce dealiased restart data file size. Separate restart data into separate files. Ensure compatibility with legacy restart data. Post-processing codes must be updated accordingly. Update makefiles to address dependencies. Add example ezViz (.ini) files. Allow multi-ASF configuration. onefile2D.f now uses MPI-IO. LES enabled.

3.0.1 (04/05) IOMETHOD added; MPI-IO requires compiling with MPI. Output combined by post-processing if not by Triple. Triple now reads only single volume files.

KNOWN BUGS --

- combinem errant if nstep0>nstep2.

3.0.2 (08/06) Subvolumes now written to separate files. $io_ext included in codeit. On-the-fly restart.

3.0.3 (07/08) Port Triple to jade (Cray XT4 at ERDC); disable DEBUG in subtrp_zyx.f

3.1.0 (09/08) Split subroutines out into their own files.

3.2.0 (11/08) Has been incorrectly referenced as GATS-DNS or Ling Wang's DNS

KNOWN BUGS --

- Segmentation-fault and other errors in particle routines.

- Memory-management error discovered in SGS code; produces seg fault.

- Error found in SGS terms; all LES solutions computed to date are incorrect.

- Memory-management error discovered for bore problem.

- Input-parameter specification and checking incomplete.

- Vertical derivative incomplete.

- Segmentation-fault error in particle-advection routines.

- Automated run-time data archiving may be incomplete for some run parameters.

3.2.1 (05/10) Bugs removed from LES routines.

3.2.1-1 (03/13) Bugs removed from particle routines. Job-submission corrections for garnet.erdc and copper.ors.

3.2.1-2 (02/14) Precompiling enabled.

3.2.2 (10/14) File I/O optimized. Bugs removed from particle routines.

3.2.3 (11/14) Email feature added to codeit. Bugs removed from LES and bore problems.

3.2.4 (11/14) Particle code updated; bugs removed.

3.2.5 (12/14) Particle restart enabled.

3.2.6 (01/15) Debug vertical derivatives.

4.0.0 (02/15) Fourth-generation FFT algorithm, factored domain layout.

4.0.1 (04/15) Derivative routines updated for factored domain layout.

Publications Resulting from Triple

- Fritts, Baumgarten, Wan, Werne, Lund 2014: "Quantifying Kelvin-Helmholtz instability dynamics observed in noctilucent clouds: 2. Modeling and interpretation of observations", J. Geophys. Res., Vol 119, pp 9359-9375, doi:10.1002/2014JD021833.

- Fritts, Wan, Werne, Lund, Hecht 2014: "Modeling the Implications of Kelvin-Helmholtz Instability Dynamics for Airglow Observations", J. Geophys. Res., Vol 119, doi:10.1002/2014JD021737.

- Stellmach, Lischper, Julien, Vasil, Cheng, Ribeiro, King, Aurnou 2014: "Approaching the Asymptotic Regime of Rapidly Rotating Convection: Boundary Layers vs Interior Dynamics", Phys. Rev. Lett. Vol 113, p 254501.

- Fritts, Wang, Werne 2013: "Gravity Wave-Fine Structure Interactions. Part I: Influences of Fine Structure Form and Orientation on Flow Evolution and Instability", J. Atmos. Sci. Vol 70(12), pp 3710-3734, doi:10.1175/JAS-D-13-055.1.

- Laughman, Fritts, Werne, Simkhada and Taylor 2013: "Interpretation of apparent simultaneous occurrences of Kelvin-Helmholtz instability in two airglow layers: Numerical Modeling", J. Geophys. Res. (submitted).

- Simkhada, Laughman, Fritts, Werne and Liu 2013: "Interpretation of apparent simultaneous occurrences of Kelvin-Helmholtz instability in two airglow layers: Observations", J. Geophys. Res. (submitted).

- Simkhada, Laughman, Fritts, Werne and Liu 2013: "Kelvin-Helmholtz instability in two airglow layers: Observations", J. Geophys. Res. (submitted).

- Laughman, Fritts, Werne, Simkhada, Taylor and Liu 2013: "Coupled small scale mesospheric dynamics in a dual shear environment over Hawaii II: Modeling and interpretation", J. Geophys. Res. (submitted).

- Fritts, Wang 2013: "Gravity Wave-Fine Structure Interactions. Part II: Energy Dissipation Evolutions, Statistics, and Implications", J. Atmos. Sci., Vol 70, pp 3735-3755, doi:10.1175/JAS-D-13-059.1.

- Julien, Rubio, Grooms, Knobloch 2012: "Statistical and physical balances in low Rossby number Rayleigh-Benard convection", Geophys. Astrophys. Fluid Dyn. Vol 106(4-5), pp 392-428, doi:10.1080/03091929.2012.696109.

- Laughman, Fritts and Werne 2011: "Comparisons of predicted bore evolutions by the Benjamin-Davis-Ono and Navier-Stokes equations for idealized mesopause thermal ducts: instability in two airglow layers", J. Geophys. Res., doi:10.1029/2010JD014409.

- Franke, Mahmoud, Raizada, Wan, Fritts, Lund and Werne 2011: "Computation of clear-air radar backscatter from numerical simulations of turbulence: 1. Numerical methods and evaluation biases", J. Geophys. Res., doi:10.1029/2011JD015895.

- Fritts, Franke, Wan, Lund and Werne 2011: "Computation of clear-air radar backscatter from numerical simulations of turbulence: 2. Backscatter moments throughout the lifecycle of a Kelvin-Helmholtz instability", J. Geophys. Res., doi:10.1029/2010JD014618.

- Werne, Fritts, Wang, Lund and Wan 2010: "Atmospheric Turbulence Forecasts for Air Force and Missile Defense Applications", Invited Paper, 20th DoD HPC User Group Conference, 14-17 June, Schaumburg, IL, doi:10.1109/HPCMP-UGC.2010.75.

- Wroblewski, Werne, Cote, Hacker and Dobosy 2010: "Temperature and velocity structure functions in the upper troposphere and lower stratosphere from aircraft measurements (invited)", J. Geophys. Res., doi:10.1029/2010JD014618.

- Fritts, Wang, Werne, Lund and Wan 2009: "Gravity Wave Instability Dynamics at High Reynolds Numbers. Part II: Turbulence Evolution, Structure, and Anisotropy", J. Atmos. Sci., doi:10.1175/2008JAS2727.1.

- Fritts, Wang, Werne, Lund and Wan 2009: "Gravity wave instability dynamics at high Reynolds numbers, 1: Wave field evolution at large amplitudes and high frequencies", J. Atmos. Sci. Vol 66, pp 1126-1148, doi:10.1175/2008JAS2726.1.

- Fritts, Wang, Werne, Lund and Wan 2009: "Gravity wave instability dynamics at high Reynolds numbers, 2: Turbulence evolution, structure, and anisotropy", J. Atmos. Sci. Vol 66, pp 1149-1171, doi:10.1175/2008JAS2727.1.

- Werne, Fritts, Wang, Lund and Wan 2009: "High-Resolution Simulations of Internal Gravity-Wave Fine Structure Interactions and Implications for Atmospheric Turbulence Forecasting", 19th DoD HPC User Group Conference, 15-19 June, San Diego, CA, doi:10.1109/HPCMP-UGC.2009.43.

- Fritts, Wang and Werne 2009: "Gravity wave fine-structure interactions: A reservoir of small-scale and large-scale turbulence energy", Geophys. Res. Lett. Vol 36, L19805, doi:10.1029/2009GL039501.

- Fritts, Laughman, Werne, Simkhada and Taylor 2009: "Numerical simulation of the linking of Kelvin-Helmholtz instabilities at adjacent shear layers", J. Geophys. Res. (to be submitted).

- Laughman, Fritts and Werne 2009: "Numerical simulation of bore generation and morphology in thermal and Doppler ducts", Ann. Geophys., SpreadFEx special issue, Vol 27, pp 511-523.

- Werne, Fritts, Wang, Lund and Wan 2008: "High-Resolution Simulations and Atmospheric Turbulence Forecasting", 18th DoD HPC User Group Conference, July, Seattle, WA.

- Ruggiero, Mahalov, Nichols, Werne and Wroblewski 2007: "Characterization of High Altitude Turbulence for Air Force Platforms", 17th DoD HPC User Group Conference, June, Pittsburgh, PA, doi:10.1109/HPCMP-UGC.2007.15.

- Fritts, Vadas, Wan and Werne 2006: "Mean and variable forcing of the middle atmosphere by gravity waves", J. Atmos. Solar-Terres. Phys., Vol 68, pp 247-265.

- Ruggiero, Werne, Mahalov, Nichols and Wroblewski 2006: "Characterization of High Altitude Turbulence for Air Force Platforms", 16th DoD HPC User Group Conference, June, Denver, CO.

- Julien, Knobloch, Milliff, Werne 2006: "Generalized quasi-geostrophy for spatially anisotropic rotationally constrained flows", J. Fluid Mech., Vol 555, pp 233-274, doi:10.1017/S0022112006008949.

- Peterson, Julien, Weiss 2006: "Vortex cores, strain cells, and filaments in quasigeostrophic turbulence", Phys. Fluids, Vol 18, p 026601, doi:10.1063/1.2166452.

- Sprague, Julien, Knobloch and Werne 2006: "Numerical simulation of an asymptotically reduced system for rotationally constrained convection", J. Fluid Mech., Vol 551, pp 141-174, doi:10.1017/S0022112005008499.

- Werne, Lund, Pettersson-Reif, Sullivan and Fritts 2005: "CAP Phase II Simulations for the Air Force HEL-JTO Project: Atmospheric Turbulence Simulations on NAVO's 3000-Processor IBM P4+ and ARL's 2000-Processor Intel Xeon EM64T Cluster", 15th DoD HPC User Group Conference, June 2005, Nashville, doi:10.1109/DODUGC.2005.16.

- Kelley, Chen, Beland, Woodman, Chau and Werne 2005: "Persistence of a Kelvin-Helmholtz Instability Complex in the Upper Troposphere", J. Geophys. Res. Vol 110, D14106, doi:10.1029/2004JD005345.

- Ruggiero, Werne, Lund, Fritts, Wan, Wang, Mahalov and Nichols 2005: "Characterization of high altitude turbulence for Air Force platforms", 15th DoD HPC User Group Conference, June, Nashville, TN.

- Werne, Birch and Julien 2004: "The Need for Control Experiments in Local Helioseismology", SOHO 14/GONG 2004, Helio- and Asteroseismology: Towards a Golden Future, New Haven, CT.

- Helgeland, Andreassen, Ommundsen, Pettersson-Reif, Werne, Gaarder 2004: "Visualization of the Energy-Containing Turbulent Scales", 2004 IEEE Symposium on Volume Visualization and Graphics (VV'04), pp 103-109, doi:10.1109/SVVG.2004.15.

- Fritts, Bizon, Werne and Meyer 2003: "Layering accompanying turbulence generation due to shear instability and gravity wave breaking", J. Geophys. Res. Vol 108, D8, p 8452, doi:10.1029/2002JD002406.

- Fritts, Gourlay, Orlando, Meyer, Werne and Lund 2003: "Numerical simulation of late wakes in stratified and sheared flows", 13th DoD HPC User Group Conference, doi:10.1109/DODUGC.2001.1253394.

- Dubrulle, Laval, Sullivan and Werne 2002: "A new dynamical subgrid model for the planetary surface layer. I. The model and a priori tests", J. Atmos. Sci. Vol 59, p 857.

- Pettersson-Reif, Werne, Andreassen, Meyer, Davis-Mansour 2002: "Entrainment-zone restratification and flow structures in stratified shear turbulence", Studying Turbulence Using Numerical Simulation Databases - IX, Proceedings of the 2002 Summer Program, Center for Turbulence Research, ed. P. Bradshaw, pp 245-256.

- Legg, Julien, McWilliams and Werne 2001: "Vertical transport by convection plumes: Modification by rotation", Phys. Chem. of the Earth, B, Vol 26 (4), pp 259-262.

- Gourlay, Arendt, Fritts and Werne 2001: "Numerical modeling of initially turbulent wakes with net momentum", Phys. Fluids Vol 13, p 3783.

- Garten, Werne, Fritts and Arendt 2001: "Direct numerical simulations of the Crow instability and subsequent vortex reconnection in a stratified fluid", J. Fluid Mech. Vol 426, p 1.

- Werne, Bizon, Meyer and Fritts 2001: "Wave-breaking and shear turbulence simulations in support of the Airborne Laser", 11th DoD HPC User Group Conference, June, Biloxi, MS.

- Werne and Fritts 2000: "Structure Functions in Stratified Shear Turbulence", DoD HPC User Group Conference, Albuquerque, NM.

- Werne and Fritts 2000: "Anisotropy in a stratified shear layer", Physics and Chemistry of the Earth, Vol 26, p 263.

- Fritts and Werne 2000: "Turbulence Dynamics and Mixing due to Gravity Waves in the Lower and Middle Atmosphere", in Atmospheric Science across the Stratopause, Geophysical Monograph 123, American Geophysical Union, pp 143-159.

- Gibson-Wilde, Werne, Fritts and Hill 2000: "Direct numerical simulation of VHF radar measurements of turbulence in the mesosphere", Radio Science, Vol 35, p 783.

- Werne, Adams and Sanders 2000: "Hierarchical Data Structure and Massively Parallel I/O", Parallel Computing.

- Werne, Adams and Sanders 2000: "Hierarchical Data Structuring: an MPP I/O How-to", Scientific Computing at NPACI, June 14, Vol 4 Issue 12.

- Werne, Adams and Sanders 2000: "Linear scaling during production runs: conquering the I/O bottleneck", in ARSC CRAY T3E Users' Group Newsletter 193, April 14, eds. T. Baring and G. Robinson.

- Gourlay, Arendt, Fritts and Werne 2000: "Numerical modeling of turbulent zero momentum late wakes in density stratified fluids", 10th DoD HPC User Group Conference, June 5-9, Albuquerque, NM.

- Gourlay, Arendt, Fritts and Werne 2000: "Numerical modeling of turbulent non-zero momentum late wakes in density stratified fluids", Fifth International Symposium on Stratified Flows, July 10-13, Vancouver, Canada.

- Gibson-Wilde, Werne, Fritts and Hill 2000: "Application of turbulence simulations to the mesosphere", Proc. MST 9 Radar Workshop, Toulouse, France.

- Julien, Legg, McWilliams and Werne 1999: "Plumes in rotating convection: Part 1. Ensemble statistics and dynamical balances", J. Fluid Mech. Vol 391, pp 151-187.

- Werne and Fritts 1999: "Anisotropy in Stratified Shear Turbulence", DoD HPC User Group Conference, Monterey, CA.

- Werne and Fritts 1999: "Stratified shear turbulence: Evolution and statistics", Geophys. Res. Lett., Vol 26, p 439.

- Hill, Gibson-Wilde, Werne and Fritts 1999: "Turbulence-induced fluctuations in ionization and application to PMSE", Earth Planets Space, Vol 51, p 499.

- Julien, Knobloch and Werne 1999: "Reduced Equations for Rotationally Constrained Convection", in the International Symposium on Turbulence and Shear Flow Phenomena, Begell House, Vol 1, pp 101-106.

- Garten, Werne, Fritts and Arendt 1999: "The Effects of Ambient Stratification on the Crow Instability and Subsequent Vortex Reconnection", in European Series in Applied and Industrial Mathematics, ESAIM Proceedings, Third International Workshop on Vortex Flow and Related Numerical Methods, Vol 7, eds. A. Giovannini, G. H. Cottet, Y. Gagnon, A. Ghoniem, E. Meiburg.

- Julien, Knobloch and Werne 1998: "A new class of equations for rotationally constrained flows", Theoretical and Computational Fluid Dynamics, Vol 11, pp 251-261.

- Julien, Knobloch and Werne 1998: "A Reduced Description for Rapidly Rotating Turbulent Convection", in Advances in Turbulence VII, ed. U. Frisch, pp 472-482, Kluwer Academic Publishers.

- Werne 1998: "Comment on 'There is no Error in the Kleiser-Schumann Influence-Matrix Method'", J. Comput. Phys. Vol 141, p 88.

- Werne and Fritts 1998: "Turbulence in Stratified and Shear Fluids: T3E Simulations", 8th DoD HPC User Group Conference, Houston, TX.

- Garten, Arendt, Fritts and Werne 1998: "Dynamics of counter-rotating vortex pairs in stratified and sheared environments", J. Fluid Mech. Vol 361, pp 189-236.

- Bizon, Predtechensky, Werne, Julien, McCormick, Swift and Swinney 1997: "Dynamics and Scaling in Quasi Two-Dimensional Turbulent Convection", Physica A, Vol 239, p 204.

- Bizon, Werne, Predtechensky, Julien, McCormick, Swift and Swinney 1997: "Plume Dynamics in Quasi 2D Turbulent Convection", Chaos, Vol 7, pp 107-124.

- Julien, Werne, Legg and McWilliams 1997: "The effects of rotation on the global dynamics of turbulent convection", in SCORe'96: Solar Convection and Oscillations and their Relationship, eds. J. Christensen-Dalsgaard and F. P. Pijpers, Kluwer Academic Publ., pp 227-230.

- Werne 1996: "Turbulent convection: what has rotation taught us?", in Geophysical and Astrophysical Convection, eds. Fox and Kerr, Gordon and Breach, p 221.

- Julien, Legg, McWilliams and Werne 1996: "Rapidly Rotating Turbulent Rayleigh-Benard Convection", J. Fluid Mech., Vol 322, pp 243-273.

- Julien, Legg, McWilliams and Werne 1996: "Hard turbulence in rotating Rayleigh-Benard convection", Phys. Rev. E, Vol 53, pp R5557-R5560.

- Julien, Legg, McWilliams and Werne 1996: "Penetrative Convection in Rapidly Rotating Flows: Preliminary Results from Numerical Simulation", Dyn. Atmos. Oceans, Vol 24, pp 237-249.

- Julien, Werne, Legg and McWilliams 1996: "The effect of rotation on convective overshoot", in SCORe'96: Solar Convection and Oscillations and their Relationships, eds. J. Christensen-Dalsgaard and F. P. Pijpers, Kluwer Academic Publ., pp 231-234.

- Werne 1995: "Turbulent Rotating Rayleigh-Benard Convection with Comments on 2/7", Woods Hole Oceanog. Inst. Tech. Rept. WHOI-95-27.

- Werne 1995: "Incompressibility and No-Slip Boundaries in the Chebyshev-Tau Approximation: Correction to Kleiser and Schumann's Influence-Matrix Solution", J. Comput. Phys., Vol 120, p 260.